
The Data Quality Bottleneck Every Data Scientist Knows

You’ve just received a new dataset. Before diving into analysis, you need to understand what you’re working with: How many missing values? Which columns are problematic? What’s the overall data quality score?

Most data scientists spend 15-30 minutes manually exploring each new dataset—loading it into pandas, running .info(), .describe(), and .isnull().sum(), then creating visualizations to understand missing data patterns. This routine gets tedious when you’re evaluating multiple datasets daily.

What if you could paste any CSV URL and get a professional data quality report in under 30 seconds? No Python environment setup, no manual coding, no switching between tools.

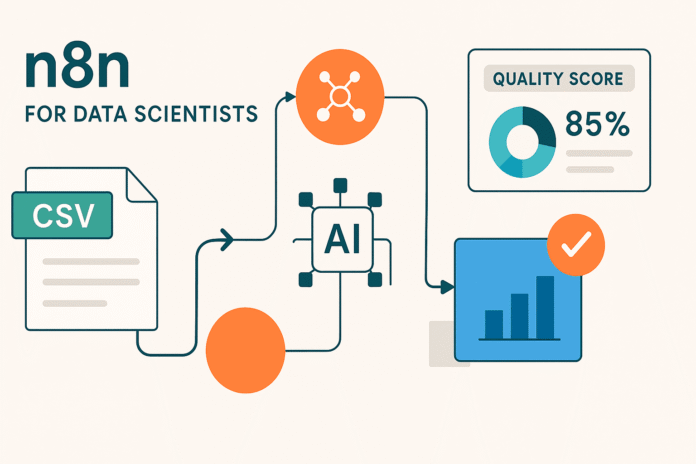

The Solution: A 4-Node n8n Workflow

n8n (pronounced “n-eight-n”) is an open-source workflow automation platform that connects different services, APIs, and tools through a visual, drag-and-drop interface. While most people associate workflow automation with business processes like email marketing or customer support, n8n can also assist with automating data science tasks that traditionally require custom scripting.

Unlike writing standalone Python scripts, n8n workflows are visual, reusable, and easy to modify. You can connect data sources, perform transformations, run analyses, and deliver results—all without switching between different tools or environments. Each workflow consists of “nodes” that represent different actions, connected together to create an automated pipeline.

Our automated data quality analyzer consists of four connected nodes:

- Manual Trigger – Starts the workflow when you click “Execute”

- HTTP Request – Fetches any CSV file from a URL

- Code Node – Analyzes the data and generates quality metrics

- HTML Node – Creates a beautiful, professional report

Building the Workflow: Step-by-Step Implementation

Prerequisites

- n8n account (free 14-day trial at n8n.io)

- Our pre-built workflow template (JSON file provided)

- Any CSV dataset accessible via public URL (we’ll provide test examples)

Step 1: Import the Workflow Template

Rather than building from scratch, we’ll use a pre-configured template that includes all the analysis logic:

- Download the workflow file

- Open n8n and click “Import from File”

- Select the downloaded JSON file – all four nodes will appear automatically

- Save the workflow with your preferred name

The imported workflow contains four connected nodes with all the complex parsing and analysis code already configured.

Step 2: Understanding Your Workflow

Let’s walk through what each node does:

Manual Trigger Node: Starts the analysis when you click “Execute Workflow.” Perfect for on-demand data quality checks.

HTTP Request Node: Fetches CSV data from any public URL. Pre-configured to handle most standard CSV formats and return the raw text data needed for analysis.

Code Node: The analysis engine that includes robust CSV parsing logic to handle common variations in delimiter usage, quoted fields, and missing value formats. It automatically:

- Parses CSV data with intelligent field detection

- Identifies missing values in multiple formats (null, empty, “N/A”, etc.)

- Calculates quality scores and severity ratings

- Generates specific, actionable recommendations
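The template's actual Code node logic is not reproduced in this article. As a rough illustration of the parsing and missing-value steps listed above, here is a minimal JavaScript sketch. It assumes a comma delimiter by default, double-quoted fields, and a hypothetical list of missing-value tokens. The names `parseCsv`, `countMissingByColumn`, and `MISSING_TOKENS` are illustrative and not taken from the template.

```javascript
// Hypothetical missing-value tokens (compared in lowercase); the template's real list may differ.
const MISSING_TOKENS = new Set(["", "null", "na", "n/a", "nan", "none"]);

// Minimal CSV parser: handles quoted fields, escaped quotes (""), and CRLF line endings.
function parseCsv(text, delimiter = ",") {
  const rows = [];
  let row = [];
  let field = "";
  let inQuotes = false;
  for (let i = 0; i < text.length; i++) {
    const ch = text[i];
    if (inQuotes) {
      if (ch === '"' && text[i + 1] === '"') {
        field += '"'; // an escaped quote inside a quoted field
        i++;
      } else if (ch === '"') {
        inQuotes = false;
      } else {
        field += ch;
      }
    } else if (ch === '"') {
      inQuotes = true;
    } else if (ch === delimiter) {
      row.push(field);
      field = "";
    } else if (ch === "\n" || ch === "\r") {
      if (ch === "\r" && text[i + 1] === "\n") i++; // treat CRLF as one line break
      row.push(field);
      rows.push(row);
      row = [];
      field = "";
    } else {
      field += ch;
    }
  }
  // Flush the last row when the text does not end with a line break.
  if (field !== "" || row.length > 0) {
    row.push(field);
    rows.push(row);
  }
  // Drop blank lines, which parse as a single empty field.
  // (Simplification: an empty value in a one-column file is dropped too.)
  return rows.filter((r) => !(r.length === 1 && r[0] === ""));
}

// Count missing values per column; the first row is assumed to be the header.
function countMissingByColumn(rows) {
  const [header, ...records] = rows;
  const counts = Object.fromEntries(header.map((name) => [name, 0]));
  for (const record of records) {
    header.forEach((name, idx) => {
      const value = (record[idx] ?? "").trim().toLowerCase();
      if (MISSING_TOKENS.has(value)) counts[name] += 1;
    });
  }
  return counts;
}

const sampleCsv = 'name,age,city\n"Doe, Jane",34,Tokyo\nBob,N/A,\n';
console.log(countMissingByColumn(parseCsv(sampleCsv)));
```

In this sample, the quoted comma in `"Doe, Jane"` stays inside one field, and both `N/A` and the empty trailing field count as missing.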

HTML Node: Transforms the analysis results into a beautiful, professional report with color-coded quality scores and clean formatting.
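To give a sense of how analysis results might become a color-coded report, here is a small JavaScript sketch that builds an HTML string. The `summary` shape, the function names, and the colors are assumptions for illustration. The template's real markup and palette are not shown in this article.

```javascript
// Escape text before placing it in HTML so column names cannot break the markup.
function escapeHtml(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Illustrative colors only (assumption): green, amber, or red depending on the score.
function scoreColor(score) {
  if (score >= 85) return "#2e7d32";
  if (score >= 60) return "#f9a825";
  return "#c62828";
}

// Build a simple report from a hypothetical summary object:
// { score: number, columns: [{ name: string, missing: number }] }
function renderReport(summary) {
  const rows = summary.columns
    .map((c) => `<tr><td>${escapeHtml(c.name)}</td><td>${c.missing}</td></tr>`)
    .join("");
  return [
    "<h1>Data Quality Report</h1>",
    `<p style="color:${scoreColor(summary.score)}">Quality score: ${summary.score.toFixed(2)}%</p>`,
    `<table><tr><th>Column</th><th>Missing values</th></tr>${rows}</table>`,
  ].join("\n");
}

console.log(renderReport({ score: 99.42, columns: [{ name: "Total", missing: 1 }] }));
```

Escaping column names matters because CSV headers come from external files and may contain characters such as `<` or `&`.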

Step 3: Customizing for Your Data

To analyze your own dataset:

- Click on the HTTP Request node

- Replace the URL with your CSV dataset URL:

  - Current: https://raw.githubusercontent.com/fivethirtyeight/data/master/college-majors/recent-grads.csv

  - Your data: https://your-domain.com/your-dataset.csv

- Save the workflow

That’s it! The analysis logic automatically adapts to different CSV structures, column names, and data types.
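How the template adapts to different CSV layouts is not spelled out here. One common heuristic, sketched below in JavaScript, is to pick the candidate delimiter that appears the same number of times on each of the first few lines. The function name `detectDelimiter` and the candidate list are assumptions. The sketch also ignores delimiters inside quoted fields, so treat it as a starting point rather than a complete detector.

```javascript
// Guess the delimiter by checking which candidate occurs consistently across the first lines.
function detectDelimiter(text, candidates = [",", ";", "\t", "|"]) {
  const lines = text
    .split(/\r?\n/)
    .filter((line) => line.length > 0)
    .slice(0, 5);
  let best = candidates[0]; // fall back to the first candidate when nothing matches
  let bestCount = 0;
  for (const candidate of candidates) {
    const counts = lines.map((line) => line.split(candidate).length - 1);
    const consistent = counts.every((c) => c === counts[0]);
    if (consistent && counts[0] > bestCount) {
      best = candidate;
      bestCount = counts[0];
    }
  }
  return best;
}

console.log(JSON.stringify(detectDelimiter("id;name;score\n1;Ada;90\n2;Lin;85\n")));
```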

Step 4: Execute and View Results

- Click “Execute Workflow” in the top toolbar

- Watch the nodes process – each will show a green checkmark when complete

- Click on the HTML node and select the “HTML” tab to view your report

- Copy the report or take screenshots to share with your team

The entire process takes under 30 seconds once your workflow is set up.

Understanding the Results

The color-coded quality score gives you an immediate assessment of your dataset:

- 95-100%: Perfect or near-perfect data quality, ready for immediate analysis

- 85-94%: Excellent quality with minimal cleaning needed

- 75-84%: Good quality, some preprocessing required

- 60-74%: Fair quality, moderate cleaning needed

- Below 60%: Poor quality, significant data work required

Note: This implementation uses a straightforward missing-data-based scoring system. Advanced quality metrics like data consistency, outlier detection, or schema validation could be added to future versions.
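The exact scoring formula is not given in this article. As one plausible reading of a missing-data-based score, the JavaScript sketch below computes the percentage of non-missing cells and maps a score to the bands listed above. Both `completenessScore` and `qualityBand` are hypothetical names, and the cell-based formula is an assumption that may not match the template's numbers.

```javascript
// Assumed formula: percentage of cells that are not missing.
function completenessScore(totalCells, missingCells) {
  if (totalCells === 0) return 0;
  return ((totalCells - missingCells) * 100) / totalCells;
}

// Map a score to the bands from the list above.
// Lower bounds are inclusive, so fractional scores such as 94.5 fall into the lower band.
function qualityBand(score) {
  if (score >= 95) return "Perfect or near-perfect";
  if (score >= 85) return "Excellent";
  if (score >= 75) return "Good";
  if (score >= 60) return "Fair";
  return "Poor";
}

const exampleScore = completenessScore(200, 10);
console.log(exampleScore, qualityBand(exampleScore));
```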

Here’s what the final report looks like:

Our example analysis shows a 99.42% quality score – indicating the dataset is largely complete and ready for analysis with minimal preprocessing.

Dataset Overview:

- 173 Total Records: A small but sufficient sample size ideal for quick exploratory analysis

- 21 Total Columns: A manageable number of features that allows focused insights

- 4 Columns with Missing Data: A few select fields contain gaps

- 17 Complete Columns: The majority of fields are fully populated
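The four overview figures can be derived from per-column missing counts. The following JavaScript sketch shows one way to do that, assuming a hypothetical `missingByColumn` object that maps column names to missing-value counts. The function name and the sample column names are illustrative.

```javascript
// Derive overview figures from a record count and per-column missing counts.
function datasetOverview(recordCount, missingByColumn) {
  const names = Object.keys(missingByColumn);
  const withMissing = names.filter((name) => missingByColumn[name] > 0);
  return {
    totalRecords: recordCount,
    totalColumns: names.length,
    columnsWithMissing: withMissing.length,
    completeColumns: names.length - withMissing.length,
  };
}

// Made-up sample input for illustration only.
console.log(datasetOverview(3, { id: 0, age: 1, city: 2 }));
```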

Testing with Different Datasets

To see how the workflow handles varying data quality patterns, try these example datasets:

- Iris Dataset (https://raw.githubusercontent.com/uiuc-cse/data-fa14/gh-pages/data/iris.csv) typically shows a perfect score (100%) with no missing values.

- Titanic Dataset (https://raw.githubusercontent.com/datasciencedojo/datasets/master/titanic.csv) demonstrates a more realistic 67.6% score due to missing data concentrated in columns like Age and Cabin.

- Your Own Data: Upload the file to GitHub and use its raw URL, or use any public CSV URL.

Based on your quality score, you can determine next steps:

- 95% and above: Proceed directly to exploratory data analysis

- 85-94%: Do minimal cleaning of the identified problematic columns

- 75-84%: Plan for moderate preprocessing work

- 60-74%: Plan targeted cleaning strategies for multiple columns

- Below 60%: Evaluate whether the dataset is suitable for your analysis goals or whether significant data work is justified

The workflow adapts automatically to any CSV structure, allowing you to quickly assess multiple datasets and prioritize your data preparation efforts.

Next Steps

1. Email Integration

Add a Send Email node to automatically deliver reports to stakeholders by connecting it after the HTML node. This transforms your workflow into a distribution system where quality reports are automatically sent to project managers, data engineers, or clients whenever you analyze a new dataset. You can customize the email template to include executive summaries or specific recommendations based on the quality score.

2. Scheduled Analysis

Replace the Manual Trigger with a Schedule Trigger to automatically analyze datasets at regular intervals, perfect for monitoring data sources that update frequently. Set up daily, weekly, or monthly checks on your key datasets to catch quality degradation early. This proactive approach helps you identify data pipeline issues before they impact downstream analysis or model performance.
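Catching quality degradation requires comparing the latest score with an earlier one. Here is a minimal JavaScript sketch, assuming you keep a hypothetical `history` array of past scores (oldest first) and treat a drop of more than 5 points as a warning. How the history is stored between scheduled runs is left out, and the threshold is an arbitrary assumption.

```javascript
// Compare the two most recent scores and flag a drop larger than `maxDrop` points.
function checkDegradation(history, maxDrop = 5) {
  if (history.length < 2) return { degraded: false, drop: 0 };
  const latest = history[history.length - 1].score;
  const previous = history[history.length - 2].score;
  const drop = previous - latest;
  return { degraded: drop > maxDrop, drop };
}

// Made-up scores for illustration only.
console.log(checkDegradation([{ score: 98 }, { score: 97 }, { score: 88 }]));
```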

3. Multiple Dataset Analysis

Modify the workflow to accept a list of CSV URLs and generate a comparative quality report across multiple datasets simultaneously. This batch processing approach is invaluable when evaluating data sources for a new project or conducting regular audits across your organization’s data inventory. You can create summary dashboards that rank datasets by quality score, helping prioritize which data sources need immediate attention versus those ready for analysis.
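Once each dataset has a score, ranking is straightforward. The JavaScript sketch below sorts results from lowest to highest score and splits them at a threshold. The function name `summarizeDatasets`, the result shape, and the 95% default threshold are assumptions. The sample scores are the ones quoted earlier in this article.

```javascript
// Rank datasets by score (lowest first) and split them into two groups.
function summarizeDatasets(results, readyThreshold = 95) {
  // Copy before sorting so the caller's array is not mutated.
  const ranked = [...results].sort((a, b) => a.score - b.score);
  return {
    needsAttention: ranked.filter((r) => r.score < readyThreshold),
    ready: ranked.filter((r) => r.score >= readyThreshold),
  };
}

const comparison = summarizeDatasets([
  { name: "iris", score: 100 },
  { name: "titanic", score: 67.6 },
  { name: "recent-grads", score: 99.42 },
]);
console.log(comparison.needsAttention.map((r) => r.name));
console.log(comparison.ready.map((r) => r.name));
```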

4. Different File Formats

Extend the workflow to handle other data formats beyond CSV by modifying the parsing logic in the Code node. For JSON files, adapt the data extraction to handle nested structures and arrays, while Excel files can be processed by adding a preprocessing step to convert XLSX to CSV format. Supporting multiple formats makes your quality analyzer a universal tool for any data source in your organization, regardless of how the data is stored or delivered.
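For JSON input, one way to reuse a column-based missing-value check is to flatten each nested record into dot-separated keys first. The JavaScript sketch below illustrates the idea. `flattenRecord` is a hypothetical helper, and keeping arrays as single values is a simplifying assumption.

```javascript
// Flatten nested objects into dot-separated keys, e.g. { a: { b: 1 } } becomes { "a.b": 1 }.
function flattenRecord(value, prefix = "", out = {}) {
  if (value !== null && typeof value === "object" && !Array.isArray(value)) {
    for (const [key, child] of Object.entries(value)) {
      flattenRecord(child, prefix ? `${prefix}.${key}` : key, out);
    }
  } else {
    // Primitives, nulls, and arrays are stored as-is; a fuller version might expand arrays.
    out[prefix] = value;
  }
  return out;
}

console.log(flattenRecord({ id: 1, user: { name: "Ada", address: { city: null } } }));
```

After flattening, a null at `user.address.city` can be counted like any other missing cell.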

Conclusion

This n8n workflow demonstrates how visual automation can streamline routine data science tasks while maintaining the technical depth that data scientists require. By leveraging your existing coding background, you can customize the JavaScript analysis logic, extend the HTML reporting templates, and integrate with your preferred data infrastructure — all within an intuitive visual interface.

The workflow’s modular design makes it particularly valuable for data scientists who understand both the technical requirements and business context of data quality assessment. Unlike rigid no-code tools, n8n allows you to modify the underlying analysis logic while providing visual clarity that makes workflows easy to share, debug, and maintain. You can start with this foundation and gradually add sophisticated features like statistical anomaly detection, custom quality metrics, or integration with your existing MLOps pipeline.

Most importantly, this approach bridges the gap between data science expertise and organizational accessibility. Your technical colleagues can modify the code while non-technical stakeholders can execute workflows and interpret results immediately. This combination of technical sophistication and user-friendly execution makes n8n ideal for data scientists who want to scale their impact beyond individual analysis.

Born in India and raised in Japan, Vinod brings a global perspective to data science and machine learning education. He bridges the gap between emerging AI technologies and practical implementation for working professionals, creating accessible learning pathways for complex topics like agentic AI, performance optimization, and AI engineering. His work centers on practical machine learning implementations and on mentoring the next generation of data professionals through live sessions and personalized guidance.